In my projects I need to design a transactional database, and from the beginning I know that it will be the source of an ETL process feeding a Data Warehouse. I add a Primary Key (single-column or composite) and standardized metadata columns to all the tables. The date-tracking columns: Option 1 […]
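As a rough illustration of why date-tracking metadata columns help a downstream ETL, here is a minimal sketch using Python's built-in `sqlite3`. The table name `customer`, the columns `created_at` and `updated_at`, and the helper `changed_since` are all hypothetical, chosen only for this example; they are not the options described in the post.

```python
import sqlite3

# Hypothetical schema: the table and column names are assumptions for illustration.
DDL = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,                      -- single-column primary key
    name        TEXT NOT NULL,
    created_at  TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,  -- metadata: row creation time
    updated_at  TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP   -- metadata: last modification time
);
"""


def changed_since(conn, watermark):
    """Return the ids of rows modified after the watermark (an incremental extract)."""
    rows = conn.execute(
        "SELECT customer_id FROM customer WHERE updated_at > ? ORDER BY customer_id",
        (watermark,),
    ).fetchall()
    return [row[0] for row in rows]


conn = sqlite3.connect(":memory:")
conn.executescript(DDL)

# Explicit timestamps keep the example deterministic.
conn.executemany(
    "INSERT INTO customer (customer_id, name, created_at, updated_at) VALUES (?, ?, ?, ?)",
    [
        (1, "Ann", "2025-01-01 00:00:00", "2025-01-01 00:00:00"),
        (2, "Bob", "2025-01-01 00:00:00", "2025-01-03 09:30:00"),
    ],
)

# Only the row touched after the last ETL run is picked up.
print(changed_since(conn, "2025-01-02 00:00:00"))
```

With a column like `updated_at` on every table, the ETL can store the timestamp of its last run and pull only the rows changed since then, instead of reloading whole tables.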

Yearly Archives: 2025

All the posts about Fabric Framework are under the tag "fabric-framework". After you create the Fabric Workspace, you need to create the objects that will run the automation. Upload the folder “one_time_exec” and run the pipeline “pl_one_time_exec_master”. Diagram: This pipeline has to be run once. It calls notebooks in order: Configuration File You […]

All the posts about Fabric Framework are under the tag "fabric-framework". I started working on a Fabric Framework. In a few words, this is: Diagram Repository: You can find an early version of the source code on GitHub. Dataflow: The starting point is an hourly scheduled pipeline “pl_master” that: Folders and Files […]

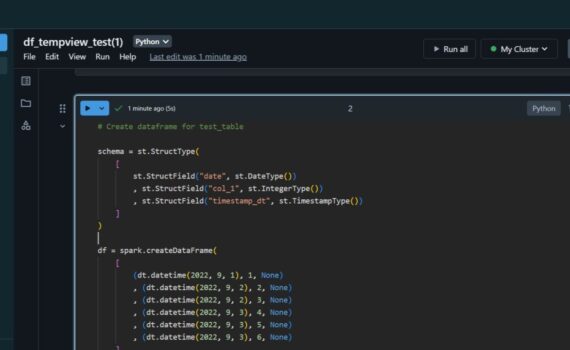

When we load data into a Spark DataFrame, it continues to live in the source (the Delta table). In this example I: Spark DataFrame Pandas DataFrame When we select data from a Delta table into a pandas DataFrame, the data is transferred to the DataFrame. Keep it simple :-)